Essay

Why is the smart meter silent? Defeating collisions in NB-IoT networks

IoT networks were designed for millions of devices, yet they already choke on thousands. When the lights flickered for a second in our area, 10,000 smart meters simultaneously lost contact and began to reconnect. Three quarters never made it on air. The problem lies in RACH, the random access channel. During mass connections it becomes a bottleneck where every device tries to break through first.

My name is Maxim Knyazev, senior systems engineer at K2 Cybersecurity, and I have trained five AI agents to manage this chaos. One predicts load peaks, another allocates RACH slots, a third classifies traffic by type, a fourth balances load between cells, and a fifth optimizes battery consumption. As a result, compared with a static, agentless configuration, the collision rate dropped from 26% to 7%, energy consumption fell by 35%, and connection success rose to 96%. Below I'll explain how it works.

The main culprit behind all these troubles is RACH, the Random Access Channel. It works simply: when an IoT device has data, it does not wait for permission from the base station. It picks a time slot and tries to transmit. This is simple and effective, but only until all devices try to communicate at the same time.

There is no queue or schedule in RACH - whoever gets there first takes the channel. It is like a crowd pouring out of a stadium after a football game. If, for example, two water meters land in the same time slot, their signals overlap. The base station hears only unintelligible noise and cannot decode the messages. This is a collision - the main headache of any IoT network during mass connections.

Now imagine thousands of such devices. After one unsuccessful connection attempt, a smart meter does not give up. It waits for the next slot to open up and knocks again.

This creates a vicious circle: more collisions → more retries → higher load on the channel → even more collisions. The system eats itself. Delays grow from several seconds to tens of minutes, and connection success drops to 70% or even lower. On top of that, each attempt burns battery power.
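The effect of crowding can be estimated with a back-of-the-envelope model. The sketch below assumes that each of `n_devices` contending devices independently picks one of `n_slots` access opportunities uniformly at random. This is a simplification, and the function names and the example figure of 48 slots are illustrative rather than taken from the experiments.

```python
def collision_probability(n_devices: int, n_slots: int) -> float:
    """Probability that a given device shares its slot with at least one other device."""
    if n_devices < 1 or n_slots < 1:
        raise ValueError("n_devices and n_slots must be positive")
    # The other (n_devices - 1) devices must all avoid this device's slot
    return 1.0 - (1.0 - 1.0 / n_slots) ** (n_devices - 1)


def expected_successes(n_devices: int, n_slots: int) -> float:
    """Expected number of devices that end up alone in their slot."""
    return n_devices * (1.0 - collision_probability(n_devices, n_slots))


if __name__ == "__main__":
    for n in (10, 50, 200):
        p = collision_probability(n, n_slots=48)
        print(f"{n:>4} devices, 48 slots: P(collision) = {p:.2f}")
```

Under this model the collision probability rises quickly with the number of contenders, and every retry adds a contender to the next round. That is the feedback loop described above.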

From brute force to glimpses of intelligence

The first solution that comes to mind is Access Class Barring (ACB). The system simply prohibits some devices from accessing the network in order to relieve the channel. It works, but it is like treating a headache with a guillotine - crude and with side effects. ACB does not distinguish signals by importance. To it, a gas leak sensor and a weather station are equal - both can be barred with the same probability.

I decided to approach the problem differently and turned to reinforcement learning (RL), the same technique used to train bots to play chess. Only here I taught the agent to manage the flow of IoT devices through Access Class Barring and RACH slot allocation. Reduce collisions by 10% - get +10 points; increase latency by 20 ms - get -5. This way the agent learns to maximize its reward in the long run.
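As a rough illustration, the scoring rule above can be written as a reward function. Only the two anchor points (+10 for a 10% collision reduction, -5 for 20 ms of added latency) come from the text. The linear scaling between them and the function and parameter names are assumptions.

```python
def reward(collision_reduction_pct: float, latency_increase_ms: float) -> float:
    """Linear reward: +10 per 10% fewer collisions, -5 per 20 ms of extra latency.

    The linear interpolation between the anchor points is an assumption.
    """
    collision_term = 10.0 * (collision_reduction_pct / 10.0)
    latency_term = -5.0 * (latency_increase_ms / 20.0)
    return collision_term + latency_term


if __name__ == "__main__":
    # A step that cuts collisions by 10% but adds 20 ms of latency
    print(reward(collision_reduction_pct=10.0, latency_increase_ms=20.0))
```

A negative `collision_reduction_pct` (collisions got worse) yields a penalty under the same formula, so the agent is not rewarded for trading one metric blindly against the other.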

Compared to the static configuration, a single RL agent reduced the number of collisions by 74%, increased connection success by 16% and improved device energy efficiency by 15%. It would seem that the problem was solved, but when I made the test scenarios more complex and closer to reality, problems began.

The agent excelled at solving one problem—minimizing collisions—but reality turned out to be more complex than the crude model.

- The first problem is device heterogeneity. The same network hosts temperature sensors that send data once an hour and gas leak sensors that stay silent for months but must get through instantly when triggered. The agent averaged them all and often ignored critical but rare signals.
- The second is load fluctuations. A business center during the day and a residential area at night generate completely different traffic patterns. The agent reacted to the current situation, but could not predict what would happen in an hour.
- The third is energy constraints. You can reduce collisions by forcing devices to transmit more data or synchronize with the network more frequently, but this drains the batteries faster. Minimizing delays and saving energy pull the system in opposite directions.

I realized that with this approach I had hit the ceiling: I was asking one agent to be a strategist, a tactician, and an energy manager at the same time. It managed, but with difficulty - like a juggler who keeps being thrown more and more balls.

The solution suggested itself: divide the task between several specialized agents. Thus was born the architecture that ultimately solved the problem.

"Ocean's Five": multi-agent system

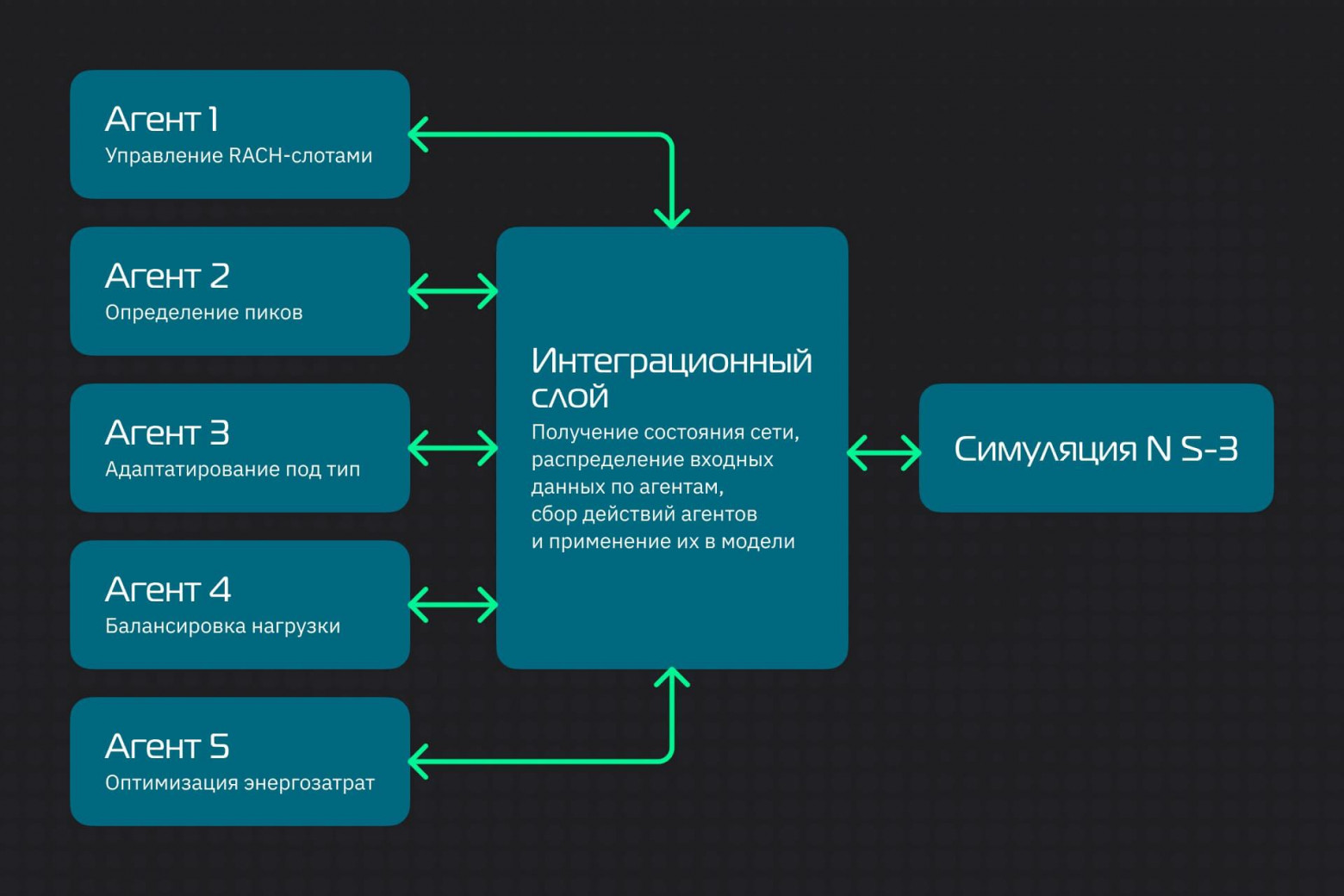

We had to split the problem into five independent subtasks and assign each to a separate RL agent.

To make them work as a single organism, I created an integration layer on top of the NS-3 simulator. It collects a complete picture of the network state and sends only relevant information to each agent. The integration layer is responsible for ensuring that solutions do not conflict with each other.
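One way to picture the "only relevant information" part of the integration layer is a simple routing table. This is a minimal sketch: the field names and the mapping of metrics to agents are hypothetical, and the conflict-resolution logic is not shown.

```python
from typing import Dict, List

# Hypothetical field names; which metrics each agent receives is an assumption
AGENT_INPUTS: Dict[str, List[str]] = {
    "slot_manager": ["collision_rate", "success_rate"],
    "predictor": ["hour_of_day", "day_of_week", "request_count"],
    "classifier": ["traffic_class_counts"],
    "balancer": ["request_count", "collision_rate", "signal_quality"],
    "energy_manager": ["retry_count", "signal_quality", "power_saving_mode"],
}


def route_state(state: dict) -> Dict[str, dict]:
    """Return, per agent, only the state fields that agent is subscribed to."""
    return {
        agent: {key: state[key] for key in keys if key in state}
        for agent, keys in AGENT_INPUTS.items()
    }


if __name__ == "__main__":
    snapshot = {"collision_rate": 0.26, "success_rate": 0.70, "retry_count": 3}
    for agent, view in route_state(snapshot).items():
        print(agent, view)
```

Keeping each agent's view narrow keeps its observation space small, which generally makes an RL policy easier to train.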

Agent 1: RACH slot manager

The first agent, the Dispatcher, works in real time using two metrics: the number of collisions and the percentage of successful connections. When collisions are frequent, it increases the number of RACH slots to relieve the channel. When the channel is idle, it reduces the number of slots to save resources. This is pure tactics without strategy: it reacts to the situation without trying to predict it.

Agent 2: Peak Load Predictor

Unlike the Dispatcher, the Predictor works proactively. It analyzes time series and identifies patterns: morning activation of office sensors at 9:00, nightly collection of meter data at 3:00, peaks by day of the week. A few minutes before a predicted surge, it signals the other agents to prepare additional resources. This is classic predictive control based on historical data.

Agent 3: Traffic Classifier

It sorts devices into three categories: periodic (weather stations with regular transmission), critical (gas leak sensors - rare, but urgent) and background (telemetry collection). For each category it configures separate ACB parameters and allocates resources. When a critical signal occurs, it temporarily blocks background traffic. This provides QoS without congesting the channel.
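A per-category barring check might look roughly like this. The sketch follows the usual ACB idea, where a device draws a random number and may attempt access only if the draw falls below the barring factor for its class. The specific factor values and all names here are hypothetical.

```python
import random

# Hypothetical barring factors: probability that an access attempt is allowed
BARRING_FACTOR = {"critical": 1.0, "periodic": 0.7, "background": 0.5}


def access_allowed(category: str, critical_active: bool, rng: random.Random) -> bool:
    """ACB-style check: compare a uniform draw with the category's barring factor."""
    if category == "background" and critical_active:
        # Background traffic is blocked while a critical signal is being served
        return False
    return rng.random() < BARRING_FACTOR[category]


if __name__ == "__main__":
    rng = random.Random(0)
    trials = 10_000
    for cat in BARRING_FACTOR:
        passed = sum(access_allowed(cat, False, rng) for _ in range(trials))
        print(f"{cat:>10}: {passed / trials:.2f} of attempts allowed")
```

With a factor of 1.0 the critical class is never barred, while background traffic is throttled first and cut off entirely during a critical event.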

Agent 4: Load Balancer

This agent handles the spatial distribution of traffic between cells and sectors. It monitors the load on each base station: number of requests, collision level, signal quality. When one station is overloaded and a neighboring one is idle, it redistributes the load by adjusting handover parameters and dynamically allocating RACH slots between sectors.

Agent 5: Energy Manager

This agent optimizes battery consumption. It analyzes the number of connection retries, signal levels and current power saving modes (PSM, eDRX). When a device spends too much energy on unsuccessful attempts, the agent adjusts its parameters: it either limits the number of retries or lengthens the sleep periods between attempts. Its goal is maximum battery life while maintaining connectivity.

Sandbox for AI: building a digital city for testing

Agents needed a realistic training environment—a digital twin of the cellular network—where they could practice “skills” without risking the actual infrastructure.

To do this, I used Network Simulator 3 (NS-3) version 3.35 with full support for NB-IoT according to the 3GPP Release 13 specification. Imagine a game engine like Unreal Engine that, instead of graphics, recreates with obsessive accuracy the physics of radio wave propagation, the logic of network protocols and the behavior of thousands of devices - that is NS-3.

The agents worked with a detailed emulation of the NPRACH physical channel with all procedures for generating preambles and establishing connections. NS-3 made it possible to control the same parameters that are available to a real operator: the number of RACH slots, the ACB coefficient, the maximum number of transmission repeats.

The simulator is written in C++, and the RL agents are written in Python. To communicate between them, I used the ns3-gym framework, which exposes the simulator as an OpenAI Gym environment.

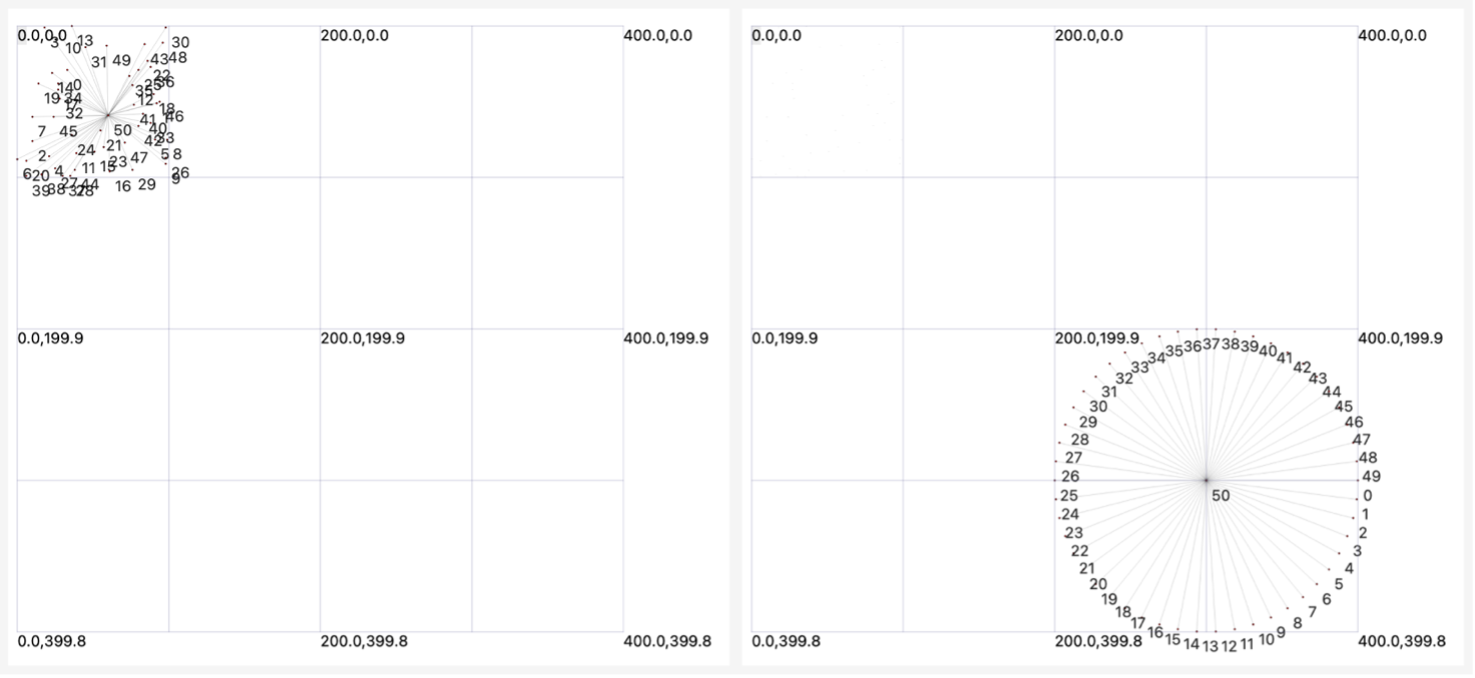

Visualization of network topology

It works like this: the simulator passes the network state (collisions, load, delays) to Python through ns3-gym. The agents process the data and return decisions - for example, change the number of RACH slots or increase the pause between attempts. ns3-gym translates the commands back to C++, the simulator applies the new parameters and continues running. This observe-decide-act loop repeats at every step of the simulation, allowing agents to learn as the simulation runs.
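The shape of that loop can be shown without the simulator. In the sketch below, `ToyRachEnv` is a toy stand-in with the same `reset`/`step` interface as a classic Gym environment. It is not ns3-gym and not the NB-IoT model, and its collision model, device count and reward are assumptions made for illustration.

```python
import random


class ToyRachEnv:
    """Toy stand-in for the simulator side (NOT ns3-gym), with a Gym-like interface."""

    SLOT_CHOICES = (4, 6, 8, 10)  # action index -> number of RACH slots

    def __init__(self, n_devices: int = 8, steps: int = 20, seed: int = 0):
        self.n_devices, self.steps = n_devices, steps
        self.rng = random.Random(seed)
        self.t = 0

    def _collision_rate(self, n_slots: int) -> float:
        # Each device picks a slot at random; a device collides if its slot is shared
        picks = [self.rng.randrange(n_slots) for _ in range(self.n_devices)]
        collided = sum(1 for p in picks if picks.count(p) > 1)
        return collided / self.n_devices

    def reset(self) -> dict:
        self.t = 0
        return {"collision_rate": 0.0}

    def step(self, action: int):
        rate = self._collision_rate(self.SLOT_CHOICES[action])
        self.t += 1
        done = self.t >= self.steps
        return {"collision_rate": rate}, -rate, done, {}


def run_episode(env, policy) -> float:
    """Run one episode and return the total reward collected."""
    obs, total, done = env.reset(), 0.0, False
    while not done:
        action = policy(obs)                     # decide
        obs, reward, done, _ = env.step(action)  # act, then observe the new state
        total += reward
    return total


if __name__ == "__main__":
    always_max_slots = lambda obs: 3
    print(run_episode(ToyRachEnv(), always_max_slots))
```

In the real setup the environment object would come from ns3-gym and the policy would be a trained network, but the loop itself stays the same.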

Each agent has a learning algorithm tailored to its task.

For the Dispatcher (agent 1), Classifier (agent 3) and Balancer (agent 4) I chose DQN (Deep Q-Network). This algorithm is well suited for problems with a discrete action space: choosing the number of RACH slots (4, 6, 8 or 10), setting the ACB coefficient (0.5, 0.7, 0.9) and so on.
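To make "discrete action space" concrete, here is a minimal sketch of how a DQN-style agent picks among the listed values. The epsilon-greedy rule is the standard exploration scheme for DQN. The Q-values below are made-up placeholders rather than trained outputs, and the names are illustrative.

```python
import random

# Discrete choices taken from the text; one list per agent
DISPATCHER_ACTIONS = (4, 6, 8, 10)      # number of RACH slots
CLASSIFIER_ACTIONS = (0.5, 0.7, 0.9)    # ACB coefficient


def epsilon_greedy(q_values, epsilon: float, rng: random.Random) -> int:
    """Return a random action index with probability epsilon, else the best-valued one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])


if __name__ == "__main__":
    rng = random.Random(0)
    placeholder_q = [0.1, 0.4, 0.9, 0.3]  # one value per Dispatcher action (made up)
    idx = epsilon_greedy(placeholder_q, epsilon=0.1, rng=rng)
    print("chosen number of RACH slots:", DISPATCHER_ACTIONS[idx])
```

The network only has to output one value per option, four for the Dispatcher and three for the ACB coefficient, which is why DQN fits these agents.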

The energy manager works with a continuous action space (transmit power from 0 to 100%, sleep periods from 0.1 to 10 seconds) and must regulate them smoothly. The best fit for it is DDPG (Deep Deterministic Policy Gradient).
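For the continuous case, an actor network typically outputs values in a bounded range such as [-1, 1], which then have to be mapped onto physical parameters. The sketch below shows that mapping for the two ranges mentioned above. The [-1, 1] convention and all names are assumptions.

```python
def scale_action(raw: float, low: float, high: float) -> float:
    """Map an actor output in [-1, 1] linearly onto [low, high], clipping out-of-range input."""
    clipped = max(-1.0, min(1.0, raw))
    return low + (clipped + 1.0) * 0.5 * (high - low)


def to_energy_action(raw_power: float, raw_sleep: float) -> dict:
    """Convert two raw actor outputs into transmit power (%) and sleep period (s)."""
    return {
        "tx_power_pct": scale_action(raw_power, 0.0, 100.0),
        "sleep_s": scale_action(raw_sleep, 0.1, 10.0),
    }


if __name__ == "__main__":
    print(to_energy_action(raw_power=0.0, raw_sleep=-1.0))
```

Because the mapping is continuous, a small change in the policy output produces a small change in power or sleep time, which is the smooth regulation the text refers to.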

The training took place in episodes of one hour of simulation time. At the beginning of each episode, new device configurations and traffic profiles were generated - this is how agents learned to adapt rather than memorize one scenario. After 500 episodes, the metrics stabilized: collisions and delays reached a plateau.

After capturing the trained policies, I ran benchmark tests against the baseline methods and the single-agent system.

Learning outcomes

I wanted not just to test agents under some average conditions, but to compare two contrasting scenarios. The point is that different agents can behave differently depending on the conditions.

The first scenario was conceived as a simulation of a high-load urban network: 1 thousand devices in an area of 1 kilometer. Its key feature is synchronous load peaks every hour, when most devices simultaneously transmit data. In essence, this is a stress test for operation under conditions of extreme channel overload.

The second scenario is conventionally rural. This is an area with a radius of 10–15 kilometers, where there are only 50 devices. There is no heavy load here, but long distances mean a weak signal. The agents' task is to achieve energy-efficient data transmission, since each repeated transmission reduces the battery life of the sensors.

I compared three approaches:

- Static configuration (baseline method).
- Single-agent RL system (our previous development).
- Multi-agent system (five specialized agents).

| Scenario | Approach | Collisions (%) | Successful connections (%) | Delay (s) | Energy (mJ) |
|---|---|---|---|---|---|
| City | Static | 26 | 70 | ~5 | 5.5 |
| City | Single-agent | 15 | 85 | 2.5 | 4.6 |
| City | Multi-agent | 7 | 96 | 1.5 | 3.6 |
| Village | Static | 4 | 90 | 1.8 | 2.0 |
| Village | Single-agent | 1.5 | 95 | 1.3 | 1.8 |
| Village | Multi-agent | ~1 | 98 | 1.0 | 1.4 |

Let's break down these results.

Testing in the "city"

The team of five agents kept the spectrum under control under peak load. Let's look at specific indicators.

- Collisions decreased almost fourfold. The static system allowed collisions in 26% of cases - roughly every fourth device collided with a neighbor when attempting to transmit. The single agent reduced this figure to 15%, and the team of agents brought it down to 7%.
- Successful connections increased to 96%. Almost all devices connected with a minimum number of attempts. In the context of mass connectivity, this level of reliability was previously unattainable.
- The delay was reduced from 5 to 1.5 seconds. The response became almost instantaneous. In critical applications, this difference can be very important.
- Energy consumption dropped by 35%. Only 3.6 mJ per successful transfer versus 5.5 mJ for static control. For battery-powered devices, this means months of extra use on a single charge.
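The headline figures follow directly from the city rows of the results table. The short check below uses the static and multi-agent values as given (the static delay is listed as approximately 5 s). The helper names are illustrative.

```python
def reduction_pct(before: float, after: float) -> float:
    """Relative reduction, in percent."""
    return (before - after) / before * 100.0


def improvement_factor(before: float, after: float) -> float:
    """How many times smaller the value became."""
    return before / after


# City scenario, values from the results table
STATIC = {"collisions_pct": 26.0, "delay_s": 5.0, "energy_mj": 5.5}
MULTI = {"collisions_pct": 7.0, "delay_s": 1.5, "energy_mj": 3.6}

if __name__ == "__main__":
    print(f"collisions: x{improvement_factor(STATIC['collisions_pct'], MULTI['collisions_pct']):.1f}")
    print(f"delay:      x{improvement_factor(STATIC['delay_s'], MULTI['delay_s']):.1f}")
    print(f"energy:     -{reduction_pct(STATIC['energy_mj'], MULTI['energy_mj']):.1f}%")
```

So 26% to 7% is a factor of about 3.7 ("almost fourfold"), 5 s to 1.5 s is a factor of about 3.3, and 5.5 mJ to 3.6 mJ is a reduction of about 34.5%, which rounds to the quoted 35%.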

Testing in the "village"

In a scenario with low device density, the static system initially performed acceptably, but the multi-agent approach proved that there was room for improvement even in such conditions.

- Collisions decreased to 1%. Conflicts during data transfer almost disappeared. For comparison, the static system produced 4% collisions even with low device density.
- The connection success rate reached 98%. Almost all devices established communication on the first try.
- Energy consumption is the main achievement. Only 1.4 mJ per transfer versus 2.0 mJ for the static system. The 30% reduction was achieved through the work of the Energy Manager (agent 5), which configured sleep modes and minimized the number of retransmissions for devices with weak signals.

Why the multi-agent approach works

Such high performance is achieved thanks to the division of functions between specialized agents. In the urban scenario, the Predictor (Agent 2) predicted traffic surges, and the Dispatcher (Agent 1) redistributed resources in advance to accommodate the growing load. In parallel, the Traffic Classifier (agent 3) reserved channels for critical messages, preventing them from being blocked by normal traffic.

After training, I measured the pairwise correlation of rewards between agents - it was 0.5. This means that agents have learned to coordinate actions: they do not conflict with each other and do not make contradictory decisions. The system works as a single whole, and not as a set of independent algorithms.
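For reference, a pairwise reward correlation can be computed as follows. This sketch uses the Pearson coefficient and averages it over all agent pairs. Whether the reported 0.5 was obtained exactly this way is an assumption, and the reward series in the example are made-up placeholders rather than experimental data.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def mean_pairwise_correlation(rewards: dict) -> float:
    """Average Pearson correlation over all pairs of agents' reward series."""
    pairs = list(combinations(rewards, 2))
    return sum(pearson(rewards[a], rewards[b]) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    # Placeholder per-episode rewards for three agents (illustrative only)
    toy = {
        "dispatcher": [1.0, 2.0, 3.0, 4.0],
        "classifier": [1.5, 1.9, 3.2, 3.8],
        "energy": [2.0, 1.0, 3.0, 2.5],
    }
    print(round(mean_pairwise_correlation(toy), 2))
```

A positive value means the agents' rewards tend to rise and fall together, while a value near -1 would suggest that one agent gains at another's expense.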

Experiments have confirmed that distributing functions among specialized RL agents provides measurable performance gains. The method showed a reduction in collisions by 3-4 times, a reduction in delays by 3 times and energy savings of 30-35% compared to static spectrum management in NB-IoT networks.

Next steps

The main conclusion of this project is that the complex problem of mass access management in NB-IoT is more effectively solved through decomposition into specialized subtasks.

The multi-agent approach using reinforcement learning has proven effective in simulation. Now the technology has to be tested and adapted for real operating conditions. In the NS-3 simulator, agents worked under controlled conditions. Real base stations will experience radio interference, hardware failures, and unpredictable device behavior. Field tests will show how robust the algorithms really are.

Another critical issue is the system's resilience to failures of individual components. I need to test scenarios with agent failures and develop compensation mechanisms. The system must continue to operate even if one or more agents fail, albeit with degraded performance.

There is also a need to explore robustness to corrupted input data and develop self-diagnosis mechanisms for early detection of problems. I am planning to add a security agent to the system. The current architecture does not distinguish between legitimate traffic bursts and targeted attacks. The new agent will analyze network behavior patterns, detect anomalies and block attempts at DDoS attacks or other types of interference.

There is still a lot to be done, but now it is clear in which direction to move. The results point to a practical way to improve the efficiency of the Internet of Things.
